
Climate change and other human-induced pressures are greatly increasing the intensity and frequency of algal blooms in coastal regions (IPCC, 2022). Ocean warming, marine heatwaves, and eutrophication create favorable conditions for rapid phytoplankton growth and biomass accumulation. More of these primary producers means more food for marine organisms, and phytoplankton play an important global role in fixing atmospheric carbon dioxide and producing much of the oxygen we breathe. But harmful algal blooms (HABs) can also form, and they may damage the ecosystem by depleting oxygen in the water, releasing toxins, clogging fish gills, and reducing biodiversity. Understanding, forecasting, and ultimately mitigating HAB events could reduce their impact on wild fish populations, help aquaculture producers avoid losses, and support a healthy ocean.

Phytoplankton respond rapidly to changes in the environment, so measuring a bloom's distribution, species composition, and abundance is essential for determining its ecological impact and potential for harm. Satellite remote sensing of chlorophyll concentration has been used extensively to observe the development of algal blooms. Although this tool offers wide spatial coverage and nearly daily temporal coverage, it is limited to surface waters and cloud-free days. Microscopic analysis of water and net samples allows much closer examination of the species present in a bloom and their abundance, but it is time-consuming and yields only discrete point samples, sparsely distributed in space and time. Neither method alone captures the rapid evolution of algal blooms, the spatial and temporal patchiness of their distributions, or their high local variability. In situ optical devices and imaging sensors mounted on mobile platforms such as autonomous underwater vehicles (AUVs) and uncrewed surface vehicles (USVs) capture fine-scale temporal trends in plankton communities, while uncrewed aerial vehicles (UAVs) complement satellite remote sensing. Autonomous platforms also offer the flexibility to react to local conditions with adaptive sampling, so that the marine environment can be examined in real time.

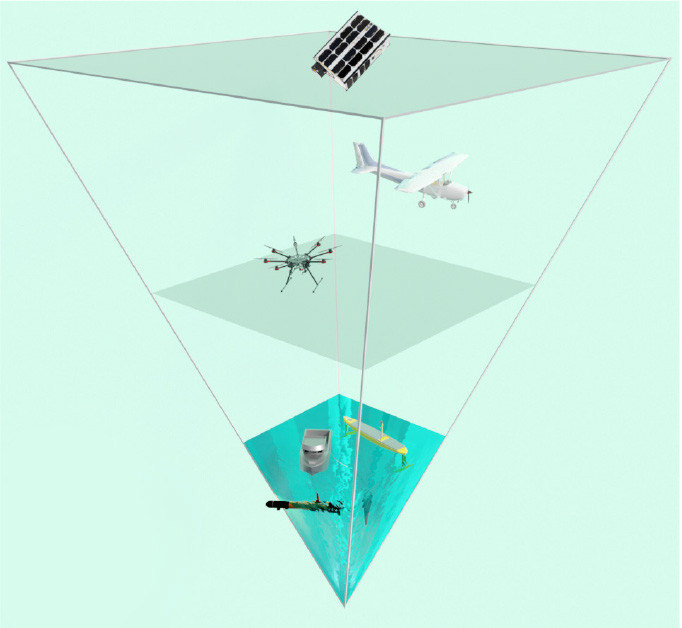

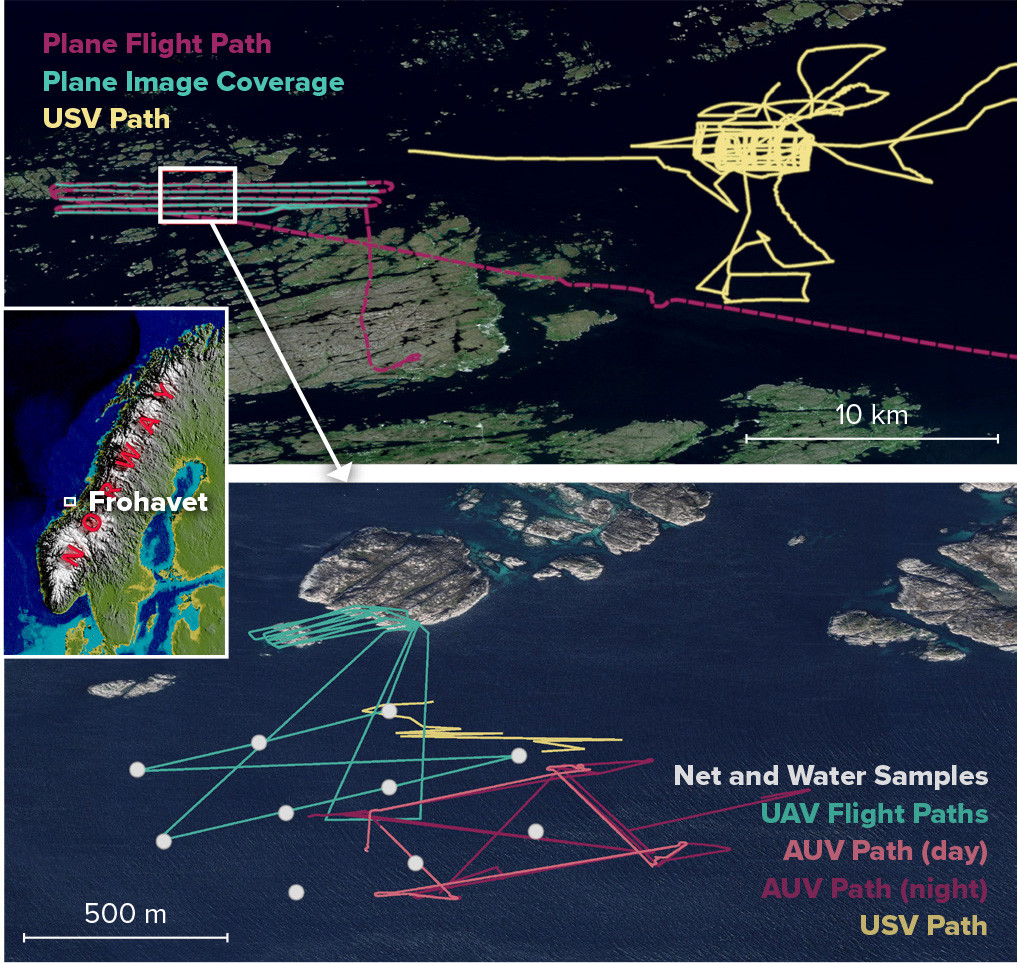

Here we present an integrated approach to observing blooms: an “observational pyramid” that combines classical and newer, complementary observation methods (Figure 1). We aim to identify trends in phytoplankton blooms in a region with strong aquaculture activity on the Atlantic coast of mid-Norway. Field campaigns were carried out in two consecutive springs (2021 and 2022) in Frohavet, an area of sea sheltered by the Froan archipelago (Figure 2). The region is a shallow, highly productive basin with abundant fisheries and a growing aquaculture industry, and it typically hosts one or more large algal blooms during the spring months. We use multiple instruments spanning macroscale to microscale perspectives, combined with oceanographic modeling and ground truthing, to provide tools for early algal bloom detection.

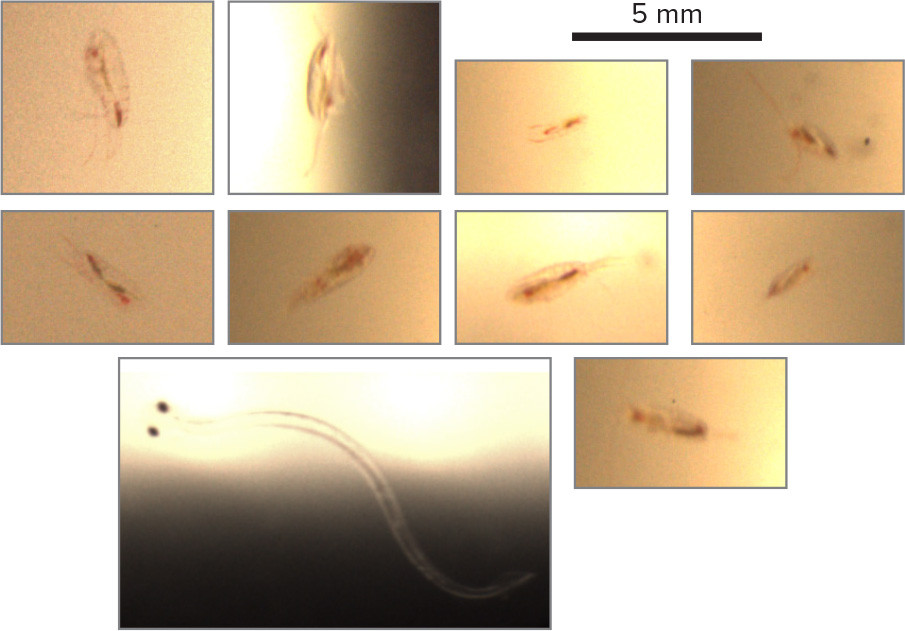

At the largest scale, we monitored ocean color in the area of interest over several weeks surrounding the main fieldwork, using multispectral images from Sentinel-2 and hyperspectral images from PRISMA in 2021, and in 2022 images from Hypso-1, a hyperspectral imaging satellite developed at the Norwegian University of Science and Technology (NTNU) (Grøtte et al., 2021). A long-endurance USV equipped with a payload of ocean sensors, including a conductivity-temperature-depth (CTD) sensor, an acoustic Doppler current profiler (ADCP), an oxygen optode, and a fluorometer, also monitored the area before, during, and after the missions. SINMOD, a coupled physical-chemical-biological ocean model, was used to simulate environmental conditions in the area of interest (Figure 3). During the fieldwork, the satellite imagery was supplemented by hyperspectral data from cameras mounted on a plane (in 2021) and on a UAV; these sensors cover smaller areas at higher resolution and are less affected by cloud cover. At the same time, we launched an AUV (Saad et al., 2020) that covered hundreds of cubic meters of ocean while taking high-magnification underwater images (Figure 4) and collecting CTD and chlorophyll data. Finally, we deployed a Niskin water sampler at a number of locations within the AUV and UAV observation area, and at several depths, to collect samples for microscopy and eDNA analysis.
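Satellite ocean color is usually reduced to a chlorophyll proxy before a bloom can be tracked from scene to scene. The text does not state which algorithm was applied to the imagery, so the sketch below is only an illustration: it computes the Normalized Difference Chlorophyll Index (NDCI), a published red-edge index, from Sentinel-2 band 4 (about 665 nm) and band 5 (about 705 nm). The function name `ndci`, the use of `None` for cloud-masked pixels, and the assumption that inputs are atmospherically corrected reflectances are hypothetical choices for this sketch, not the campaign's processing chain.

```python
from typing import List, Optional, Sequence


def ndci(b4_665: Sequence[Optional[float]],
         b5_705: Sequence[Optional[float]]) -> List[Optional[float]]:
    """Per-pixel Normalized Difference Chlorophyll Index (illustrative sketch).

    NDCI = (R705 - R665) / (R705 + R665).
    Assumption: inputs are atmospherically corrected reflectances for
    Sentinel-2 band 4 (~665 nm) and band 5 (~705 nm), given as flat
    sequences of pixels; None marks a cloud-masked pixel (hypothetical
    convention chosen for this example).
    """
    if len(b4_665) != len(b5_705):
        raise ValueError("band sequences must have the same length")
    result: List[Optional[float]] = []
    for r665, r705 in zip(b4_665, b5_705):
        # A masked pixel, or a zero denominator, cannot yield an index value.
        if r665 is None or r705 is None or r665 + r705 == 0:
            result.append(None)
        else:
            # Chlorophyll absorbs near 665 nm, so relatively higher
            # reflectance at 705 nm pushes the index upward.
            result.append((r705 - r665) / (r705 + r665))
    return result
```

Higher NDCI values suggest more chlorophyll, so per-scene averages over Frohavet could in principle flag an emerging bloom; converting the index to concentrations would still require calibration against in situ data such as the Niskin samples and fluorometer readings.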

These multisensor, multiscale operations will allow us to assess phytoplankton health and divide the growth of their populations into stages: pre-bloom (cells begin to grow), bloom (exponential growth), and post-bloom (grazing and decay). While remote sensing provides a broad view of this growth through ocean color, net and water sampling give us insight into the changing species composition within a bloom. Simultaneous AUV imaging provides information on the grazers that feed on phytoplankton, which are thought to be an important factor in the evolution of algal blooms. Multisensor operations also provide ground truth for remote sensing and help us link hyperspectral observations from novel aerial and satellite sensors to conditions in the water. All of these data sources will be used to validate and improve the SINMOD ocean model, allowing it to better predict the occurrence and composition of HABs and other algal blooms. Reliable prediction and automated observation also improve monitoring by indicating where and when expensive fieldwork with small-scale, high-resolution sensors will be most effective.
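The division into pre-bloom, bloom, and post-bloom stages can be made operational in many ways. One simple option, sketched here under stated assumptions, labels each interval of a chlorophyll time series (for example, fluorometer readings from the USV) by its net specific rate of change, r = ln(C2/C1) / (t2 - t1) per day: rapid growth marks the bloom phase, rapid decline after a bloom marks the post-bloom phase, and everything before the first rapid growth counts as pre-bloom. The function `bloom_stages` and the default threshold of 0.1 per day are hypothetical and are not the project's method.

```python
import math
from typing import List, Sequence


def bloom_stages(days: Sequence[float], chl: Sequence[float],
                 threshold: float = 0.1) -> List[str]:
    """Label each interval between consecutive samples with a bloom stage.

    Hypothetical rule set (illustrative only):
      r >= threshold                -> "bloom" (near-exponential growth)
      r <= -threshold after a bloom -> "post-bloom" (grazing and decay)
      otherwise                     -> keep the previous label
    The series starts in "pre-bloom". Returns len(chl) - 1 labels.
    """
    if len(days) != len(chl) or len(chl) < 2:
        raise ValueError("need matching series with at least two samples")
    stages: List[str] = []
    state = "pre-bloom"
    for i in range(1, len(chl)):
        dt = days[i] - days[i - 1]
        if dt <= 0 or chl[i] <= 0 or chl[i - 1] <= 0:
            raise ValueError("times must increase and concentrations be positive")
        # Net specific rate of change (per day); positive means net growth.
        r = math.log(chl[i] / chl[i - 1]) / dt
        if r >= threshold:
            # Rapid growth starts a bloom (or a secondary bloom after decline).
            state = "bloom"
        elif r <= -threshold and state == "bloom":
            # Rapid decline is only treated as post-bloom once a bloom occurred.
            state = "post-bloom"
        stages.append(state)
    return stages
```

Because slowly changing intervals inherit the previous label, a plateau at the bloom peak stays in the bloom phase instead of reverting to pre-bloom. A fixed threshold also ignores sensor noise and spatial patchiness, so in practice the series would likely need smoothing first.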

Observational efforts that combine state-of-the-art monitoring technology, multi- and hyperspectral remote sensing, ecosystem modeling, traditional water sampling, and integrated taxonomy via microscopic and molecular (eDNA) species identification are essential for a holistic understanding of bloom formation and of marine primary production overall. Our project demonstrates the advantages of this approach and promises to enable more effective ocean monitoring in the future.